🗞️ A Primer on Generative AI for Telecom

https://arxiv.org/abs/2408.09031

Whenever you see a “phrase of text like this”, it means that phrase is being quoted directly from the paper. I couldn’t find a more reliable way to convey this information, so I resorted to quoted italics.

What is this paper about?

This paper showed up in my feed because I follow Xingqin Lin 1. It provides introductory material for anyone who wants to start connecting the dots between AI 2 and the telecom vertical.

At the outset, the paper notes that AI research has a long history within the telecom domain, well before LLMs entered the mix. Table 1 summarizes the 5 pre-LLM technologies 3 and their associated applications within the telecom domain.

Next, the paper lists 4 potential usecases of LLMs within the telecom domain.

Customer Support : While it starts with the usual boring, beaten-to-death chat bot example, the paper also lists 2 concrete real-world examples of the same.

amAIz by Amdocs - “a purpose-built GenAI platform for telecom that fine-tunes generic LLMs with telecom-specific data to specialize in customer engagement. The platform utilizes LLMs trained on customer bills, orders, business policies, processes, and historical agent transcripts to provide automated billing service using natural language generation”.

Now Assist by ServiceNow - an extended service utilizing an agent ecosystem, “with features powered by fine-tuned LLMs, to enable use cases like automated service assurance”.

Field Technician Assistance : This is an extension of the chat bot usecase, but the target audience here is not the average non-technical user (aka consumers). It is field technicians, who are usually technically sound but often too busy on a work day. The usecase here is for the chat bots to assist “network technicians in the field by promptly diagnosing critical network issues and recommending efficient resolutions by synthesizing large volumes of technical manuals”.

The business impact is, of course, improved “technician’s efficiency at the cell-site, resulting in faster query resolution, enhanced troubleshooting accuracy, and minimized back-office workload (e.g., reduced frequency of supervisor calls)”.

In addition, the paper provides a concrete implementation of this usecase :

- Baioniq by Quantiphi - a “GenAI powered platform “Baioniq” from Quantiphi offers customized LLM-based virtual assistants as copilots for field technician assistance.”

Network Diagnostics, Management, and Planning : This is again a chat bot based usecase, but this time the target audience is neither the end user nor the field user. It is the operator user, i.e. the set of users who plan, design, operate & maintain the networks. The idea here is for LLMs to assimilate all the disparate knowledge sources that exist in the form of system logs, telemetry data, historical information etc. and act as an oracle of sources 4. Here too, the paper quotes 2 concrete examples to cement this usecase in the reader’s mind.

SQL GPT by Kinetica - “which leverages LLM and vectorized processing to enable telecom professionals to have interactive dialogue with network using natural language and through visualization of datapoints on a map. Furthermore, blending intelligence with auto”.

TwinX by TCS - “digital twin platform harnessing LLM-enabled insights to provide data analytics for network planning.”

Telecom Standards Chatbots : I have already touted this usecase in one of my previous blog entries 5. The idea here is to feed standards documents, such as specifications & other artifacts, to LLMs and make them standards aware. The resulting LLM can be a domain specific model that is more relevant & useful for telecom specific applications.

One of the main benefits of this usecase, in the paper’s words : “Understanding nitty-gritty details of standards spread across a slew of complex documents and their interrelations can be quite challenging and would require domain expertise. LLM-based GenAI augmented with advanced features like RAG can simplify and streamline access to complex specifications, enhancing collaboration and understanding of industry standards.”

This section is also where the authors present one of the significant contributions of this paper.

- ORAN chatbot by nVIDIA - “a RAG enabled LLM platform that provides quick summary of queries related to ORAN standards, all the way from simple, one-liner questions and answers (Q&As) to complex summarization questions requiring consultation from multiple standards documents across various O-RAN working groups.”

| Pre-LLM Technology | Potential Applications within Telecom Domain |
|---|---|
| Variational Autoencoders (VAE) | Model CSI feedback in MIMO systems. |
| Generative Adversarial Networks (GAN) | Generate synthetic channel data to model a wireless channel. |
| Normalizing Flows (NF) | MIMO signal detection within a noise distribution. |
| Diffusion Models (DF) | Model the forward channel corruption to improve error correction at the receiver. |
| Transformer Based Models (TF) | Extract abstract semantic information from a semantic communication. |

Over the next few sections, the authors introduce & discuss the design methodology of this chat bot along with some architectural details. Along the way, they also briefly educate the reader about multiple knowledge augmentation techniques like RAG, PEFT fine-tuning etc., and settle on RAG to set the stage for discussing the actual implementation of the chat bot. I go into much more detail in the upcoming section on the key contributions of this paper.

Lastly, the paper cites some of the future topics such as :

- the need for large, diverse telecom domain datasets for continued research

- the need for multi-modal LLM since telecom knowledge sources are not just text, but also “a multitude of different data modalities such as radio signals and 2D/3D environment data from camera, radar, and LiDAR.”

- a call for standards bodies to define an effective way to interoperate with different GenAI models.

What are the key contributions of this paper?

Now let’s come to the meat of this paper. Apart from citing concrete examples of GenAI usecases in telecom (which is a great contribution in my view), the most significant contribution in terms of novelty is this : a RAG chat bot grounded in O-RAN specifications.

Before getting into the chat bot implementation, the authors touch briefly on the design aspects. They cover 2 aspects, namely :

Accelerated Compute : The authors argue that GPUs are needed for LLM based applications. This section is predominantly a sales pitch: LLMs for telecom need to process data on a massive scale, and since LLMs are built on the transformer architecture, they need GPUs (as opposed to other hardware types) for training and inference.

Information Retrieval and Customization : This section basically argues that RAG and fine-tuning are the relevant ways to customize general purpose LLMs into domain specific LLMs that make sense for the telecom problem space. “However, RAG and fine-tuning are not mutually exclusive technologies, but rather can be used in tandem. When starting to build a telecom application with an LLM, RAG can be firstly applied to quickly improve accuracy. If the application demands even higher accuracy, fine-tuning can be further used to customize the LLM.”

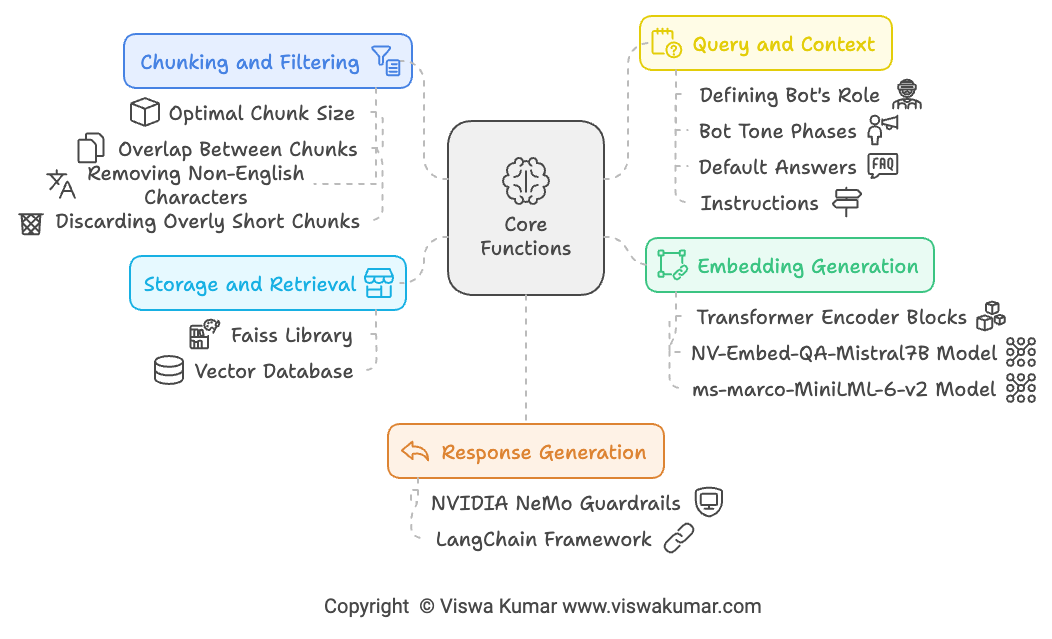

Figure 1 shows my summary of the core steps that went into designing this chatbot.

Document Ingestion and Preprocessing : First, the O-RAN specifications were pre-processed by splitting each document into chunks of 500 words, with an overlap of 100 words between consecutive chunks. Those chunks were then normalized by removing non-English characters, line breaks etc., and small non-meaningful chunks were manually inspected and discarded.
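To make the chunking step concrete, here is a minimal sketch of word-based chunking with overlap. It is not the authors’ code: the function names, the ASCII-only filter as a stand-in for “removing non-English characters”, and the `min_words` threshold that replaces the manual inspection are all my own assumptions.

```python
import re


def normalize(text: str) -> str:
    """Drop non-ASCII characters (assumed proxy for non-English) and collapse whitespace."""
    ascii_only = text.encode("ascii", errors="ignore").decode("ascii")
    return re.sub(r"\s+", " ", ascii_only).strip()


def chunk_words(text: str, size: int = 500, overlap: int = 100) -> list[str]:
    """Split text into chunks of `size` words; consecutive chunks share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = normalize(text).split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this chunk already reaches the end of the document
    return chunks


def drop_short(chunks: list[str], min_words: int = 20) -> list[str]:
    """Crude, hypothetical stand-in for the manual inspection: discard tiny chunks."""
    return [c for c in chunks if len(c.split()) >= min_words]
```

With the defaults, each new chunk starts 400 words after the previous one, so a sentence that straddles a chunk boundary still appears whole in at least one chunk.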

Embedding Generation and Storage : These chunks are then converted into embeddings using the “NV-Embed-QA-Mistral7B model as the embedding model” via the “NVIDIA NeMo retriever”, and reranked using the “ms-marco-MiniLML-6-v2 model”.
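The embed, retrieve and rerank flow can be sketched without any model at all. In the toy version below, a bag-of-words count vector stands in for the neural embedding model and a query-term coverage score stands in for the cross-encoder reranker. Both are assumptions made purely for illustration and say nothing about how the NVIDIA models behave.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (stand-in for a neural embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 3) -> list[str]:
    """First stage: return the k stored chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def rerank(query: str, chunks: list[str]) -> list[str]:
    """Second stage: reorder candidates by the fraction of query terms they cover."""
    q_terms = set(embed(query))

    def coverage(chunk: str) -> float:
        return len(q_terms & set(embed(chunk))) / len(q_terms) if q_terms else 0.0

    return sorted(chunks, key=coverage, reverse=True)
```

The index is just a list of `(chunk, embed(chunk))` pairs built once at ingestion time. A real system would keep those vectors in a vector database instead of a Python list.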

Query and Context Retrieval : In this stage, the prompts used in the RAG system were customized to yield the desired response quality. The customization covered “the instruction, context, input data & output indicator”.
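As an illustration of those four prompt parts, here is a small sketch of a prompt builder. The wording of the instruction and the output indicator is invented by me; the paper does not publish its prompts in this form.

```python
def build_prompt(question: str, contexts: list[str]) -> str:
    """Assemble a RAG prompt from instruction, context, input data and output indicator."""
    # Instruction: what role the model plays and how it must behave (hypothetical wording).
    instruction = (
        "You are an assistant for O-RAN specifications. "
        "Answer using only the context below."
    )
    # Context: the retrieved chunks, numbered so the answer can refer to them.
    context = "\n\n".join(f"[{i}] {c}" for i, c in enumerate(contexts, start=1))
    # Output indicator: the expected shape of the answer (hypothetical wording).
    output_indicator = (
        "Answer concisely and cite the context numbers you used. "
        "If the context is insufficient, say so."
    )
    return (
        f"Instruction: {instruction}\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n\n"  # input data: the user's query
        f"{output_indicator}\nAnswer:"
    )
```

Keeping the four parts as separate variables makes it easy to tune one of them, for example the output indicator, without touching the rest.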

Response Generation : In my humble opinion, this is one of the key contributions. In order to make sure that the responses are factually correct and consistent, the authors “implemented fact-checking using the NVIDIA NeMo Guardrails library. This open-source toolkit facilitates the integration of programmable guardrails into LLM-based conversational systems, enforcing specific output controls that adhere to predefined content restrictions or guidelines”.

The authors then ran multiple tests with the following configurations :

Vanilla RAG - Basic RAG by the book. This configuration yielded poorer results than the configurations below, but was still valuable compared to a vanilla LLM with no retrieval over the O-RAN specs.

Hypothetical document embeddings (HyDE) RAG - “HyDE RAG uses an LLM to generate a hypothetical answer to the user query and then performs a similarity search with both the original question and the hypothetical answer, instead of doing the similarity search with just the user’s original query.” - In simpler words, you first ask the question to a different LLM and get some answer. Then you use that answer to retrieve relevant documents from the knowledge base. Finally, you pass the retrieved docs as context, along with the original user query, to the final LLM to generate the response.

- As per the authors, “This technique outperforms standard retrievers and eliminates the need for custom embedding algorithms, but it can occasionally lead to incorrect results as it is dependent on another LLM for additional context”.
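The HyDE flow described above can be sketched in a few lines. The three callables (`draft_llm`, `search`, `answer_llm`) are hypothetical placeholders for whatever LLM client and vector search you use, and the prompt strings are my own. None of this comes from the paper’s code.

```python
from typing import Callable


def hyde_answer(
    question: str,
    draft_llm: Callable[[str], str],          # hypothetical: prompt -> text
    search: Callable[[str, int], list[str]],  # hypothetical: (query, k) -> chunks
    answer_llm: Callable[[str], str],         # hypothetical: prompt -> text
    k: int = 3,
) -> str:
    """HyDE: retrieve with question + hypothetical answer, then answer the question."""
    # Step 1: a helper LLM drafts a hypothetical answer; it may be wrong in its details.
    hypothetical = draft_llm(f"Write a short passage that answers: {question}")
    # Step 2: similarity search uses both the original question and the draft.
    hits = search(f"{question}\n{hypothetical}", k)
    # Step 3: only the retrieved chunks, not the draft, are given to the final LLM.
    context = "\n\n".join(hits)
    return answer_llm(f"Context:\n{context}\n\nQuestion: {question}\nAnswer:")
```

Note that the hypothetical answer is used only for retrieval. Keeping it out of the final prompt limits how far a wrong draft can leak into the response, although it can still steer retrieval toward the wrong chunks.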

Advanced RAG - Advanced RAG relies on query transformation techniques. Basically, the user’s original input query is transformed into multiple sub queries by a different LLM. For each sub query, relevant context is retrieved from the knowledge base and passed to the final LLM for response generation.

- As per the authors, “The query transformation enables a more thorough and nuanced comprehension of complex topics typically scattered across various documents, enhancing the chatbot’s capability to deliver relevant and accurate information.”
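Query transformation can be sketched the same way. Again, the callables (`transform_llm`, `search`, `answer_llm`) and the prompt text are hypothetical, and the one-sub-question-per-line output format is an assumption of this sketch, not something the paper specifies.

```python
from typing import Callable


def advanced_rag_answer(
    question: str,
    transform_llm: Callable[[str], str],      # hypothetical: prompt -> text
    search: Callable[[str, int], list[str]],  # hypothetical: (query, k) -> chunks
    answer_llm: Callable[[str], str],         # hypothetical: prompt -> text
    k: int = 2,
) -> str:
    """Split the question into sub queries, retrieve for each, then answer once."""
    raw = transform_llm(
        "Break the question into simpler sub-questions, one per line:\n" + question
    )
    # Strip list markers and skip blank lines; fall back to the original question.
    sub_queries = [s for s in (line.strip("-* \t") for line in raw.splitlines()) if s]
    if not sub_queries:
        sub_queries = [question]
    # Collect context for every sub query, dropping duplicate chunks but keeping order.
    seen: set[str] = set()
    context: list[str] = []
    for sub_query in sub_queries:
        for chunk in search(sub_query, k):
            if chunk not in seen:
                seen.add(chunk)
                context.append(chunk)
    joined = "\n\n".join(context)
    return answer_llm(f"Context:\n{joined}\n\nQuestion: {question}\nAnswer:")
```

De-duplicating chunks matters here because sub queries about related topics often retrieve the same passage, and repeating it only wastes context window.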

Finally, the authors discuss how they evaluated the performance of these RAG configurations by comparing the context precision & context recall metrics using the Ragas framework 6.
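To give a feel for what these two metrics measure, here is a simplified sketch based on my understanding of the Ragas definitions. In Ragas the relevance and attribution judgments come from an LLM; here they are passed in as plain booleans, which is an assumption made to keep the example self-contained.

```python
def context_precision(relevance: list[bool]) -> float:
    """Rank-aware precision: mean of precision@k over the ranks holding a relevant chunk.

    `relevance[i]` says whether the chunk retrieved at rank i+1 was judged relevant.
    """
    hits = 0
    total = 0.0
    for k, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / k  # precision@k at this relevant rank
    return total / hits if hits else 0.0


def context_recall(supported: list[bool]) -> float:
    """Fraction of ground-truth statements that the retrieved context supports.

    `supported[i]` says whether statement i of the reference answer can be
    attributed to the retrieved context.
    """
    return sum(supported) / len(supported) if supported else 0.0
```

For example, relevant chunks at ranks 1 and 3 out of 3 give a precision of (1/1 + 2/3) / 2, so pushing relevant chunks toward the top of the ranking raises the score even when the retrieved set is unchanged.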

What are my key takeaways?

For me, the key takeaways are definitely the concrete examples cited in each category of LLM usecase. If not for this paper, I would certainly be unaware of this progress. Although the paper doesn’t cite a reference or supporting resources for each of those concrete projects, it is still a welcome contribution in my view.

Next is the source of the RAG chatbot itself. Although the paper incorrectly cites the reference to the source on GitHub, I was able to figure out the actual source 7 of this chatbot, and it is included in the footnotes of this blog as well. The usage of guardrails and the fact-check utility 8 is especially worth checking out.

Another nice reference that I found while reading this paper is a paper titled “Telco-RAG: Navigating the Challenges of Retrieval Augmented Language Models for Telecommunications” 9. It’s on my to-read list. Hopefully I will write about it on papershelf someday. Stay tuned 🤓


Footnotes

1. https://www.linkedin.com/in/xingqin-lin-8b86b627/

2. especially LLM technology

3. aka preliminaries from the paper

4. referring to the oracle character in Matrix

5. Blogpost : What’s 🥣 cooking in 🦙 LLMs for 📡 Telecom?

7. Actual source of this chatbot as of the article’s posting date : oran-chatbot-multimodal

9. Telco-RAG arXiv Link